Differential Privacy | Vibepedia

Differential privacy is a rigorous method for releasing statistical information about datasets while protecting individual data subjects, used in applications…

Overview

Differential privacy was introduced in 2006 by Cynthia Dwork, Frank McSherry, Kobbi Nissim, and Adam Smith; Dwork was a researcher at Microsoft Research at the time. Since then, it has been adopted by companies like Google, which has applied it to usage statistics in products such as Chrome and Maps, and Apple, which uses it to learn aggregate usage patterns, such as popular emoji and keyboard suggestions, without identifying individual users. Differential privacy is closely related to other privacy-enhancing technologies, such as homomorphic encryption, for which Microsoft and IBM maintain open-source libraries, and secure multi-party computation, used by companies like Google and Facebook.

🔒 How It Works

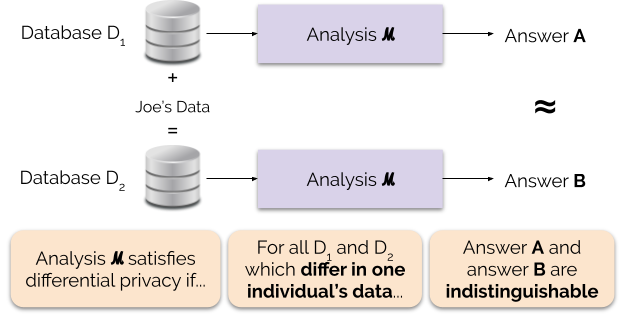

The core idea behind differential privacy is to add carefully calibrated random noise to statistical computations, so that the output changes very little whether or not any one person's record is included. This makes it difficult for an attacker to infer individual data points. Two standard building blocks are the Laplace mechanism, introduced by Dwork, McSherry, Nissim, and Smith in 2006, and the exponential mechanism, introduced by Frank McSherry and Kunal Talwar in 2007. These algorithms have been implemented in various programming languages, including Python, R, and Julia, and are used by data scientists and analysts at companies like Netflix and LinkedIn.
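As a rough illustration of the two mechanisms named above, here is a minimal Python sketch. It is not taken from any particular library: the function names (`laplace_count`, `exponential_mechanism`), the toy data, and the choice of counting queries are assumptions made for this example. The parameter `epsilon` is the usual privacy parameter, where smaller values mean more noise and stronger privacy.

```python
import math
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def laplace_count(records, predicate, epsilon, rng):
    """Noisy count of the records matching `predicate` (Laplace mechanism).

    A count has sensitivity 1: adding or removing one record changes it
    by at most 1, so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


def exponential_mechanism(candidates, utility, epsilon, sensitivity, rng):
    """Pick one candidate with probability proportional to
    exp(epsilon * utility(candidate) / (2 * sensitivity))."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    scores = [utility(c) for c in candidates]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(epsilon * (s - top) / (2.0 * sensitivity)) for s in scores]
    return rng.choices(candidates, weights=weights, k=1)[0]


# Hypothetical toy data, for illustration only.
ages = [23, 35, 41, 29, 52, 67, 38, 45]
colors = ["red", "blue", "blue", "green", "blue", "red"]

rng = random.Random(0)
noisy = laplace_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
favorite = exponential_mechanism(
    ["red", "blue", "green"], colors.count, epsilon=1.0, sensitivity=1.0, rng=rng
)
print(f"noisy count of ages >= 40: {noisy:.2f}")
print(f"privately selected most common color: {favorite}")
```

The Laplace mechanism suits numeric answers, while the exponential mechanism suits choosing one item from a fixed set of options. Naive floating-point noise sampling like this is known to be attackable in adversarial settings, so a vetted library is the safer choice for real releases.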

🌐 Applications & Implementations

Differential privacy has a wide range of applications, from data mining and machine learning to statistical analysis and survey research. For example, the US Census Bureau used differential privacy to protect the data it published from the 2020 Census, releasing demographic statistics while preserving the confidentiality of individual responses. Similarly, companies like Uber and Lyft use differential privacy to analyze data on user behavior while protecting individual privacy. Researchers like Latanya Sweeney and Kobbi Nissim have also explored the use of differential privacy in healthcare and social sciences, working with organizations like the National Institutes of Health and the World Health Organization.

🔮 Future & Challenges

As the field of differential privacy continues to evolve, new challenges and opportunities are emerging. For example, the use of differential privacy in machine learning and artificial intelligence raises important questions about the trade-off between privacy and accuracy: stronger privacy guarantees require more noise, which can reduce the quality of results. Researchers like Michael Kearns and Aaron Roth are exploring new algorithms and techniques for differential privacy, while companies like Google and Facebook are developing new applications and implementations. The future of differential privacy will likely involve combining it with related techniques, such as federated learning and secure computation, and integrating it with other privacy-enhancing technologies, like blockchain and homomorphic encryption.
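The privacy and accuracy trade-off mentioned above can be made concrete with a small simulation. The sketch below assumes a query with sensitivity 1 answered with Laplace noise of scale `sensitivity / epsilon`; the helper name `mean_abs_error` and the chosen values of `epsilon` are illustrative assumptions. In theory the expected absolute error equals the noise scale, so halving `epsilon` doubles the typical error.

```python
import random


def mean_abs_error(epsilon, sensitivity=1.0, trials=20000, seed=0):
    """Estimate the average absolute error added by Laplace noise."""
    rng = random.Random(seed)
    scale = sensitivity / epsilon
    total = 0.0
    for _ in range(trials):
        # Difference of two exponentials with mean `scale` is Laplace(0, scale).
        noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
        total += abs(noise)
    return total / trials


for eps in (0.1, 0.5, 1.0, 2.0):
    print(f"epsilon={eps:<4} expected error={1.0 / eps:6.2f} "
          f"simulated error={mean_abs_error(eps):6.2f}")
```

Smaller `epsilon` gives a stronger guarantee but a noisier answer, which is why choosing `epsilon` is a policy decision as much as a technical one.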

Key Facts

- Year: 2006
- Origin: Microsoft Research
- Category: technology
- Type: concept

Frequently Asked Questions

What is differential privacy?

Differential privacy is a mathematical framework for releasing statistical information about datasets while protecting individual data subjects.
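For readers who want the formal statement, the standard definition (a textbook formulation, not something specific to this page) says that a randomized mechanism $M$ is $\varepsilon$-differentially private if, for every pair of datasets $D$ and $D'$ that differ in one person's record and every set of possible outputs $S$:

$$
\Pr[M(D) \in S] \le e^{\varepsilon} \cdot \Pr[M(D') \in S]
$$

Here $\varepsilon \ge 0$ is the privacy parameter. When $\varepsilon$ is small, $e^{\varepsilon}$ is close to 1, so the output distribution is nearly the same with or without any single individual's data.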

How does differential privacy work?

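Differential privacy works by adding calibrated random noise to statistical computations, so that the result is nearly the same whether or not any one person's data is included. This makes it difficult for an attacker to infer individual data points.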

What are the applications of differential privacy?

Differential privacy has a wide range of applications, from data mining and machine learning to statistical analysis and survey research.

Who are the key researchers in differential privacy?

Key researchers in differential privacy include Cynthia Dwork, Frank McSherry, Kobbi Nissim, and Adam Smith, who introduced the framework in 2006, as well as Kunal Talwar and Aaron Roth.

What are the challenges and limitations of differential privacy?

The challenges and limitations of differential privacy include the trade-off between privacy and accuracy, and the difficulty of implementing differential privacy in practice.